Alby, Cynthia. “ChatGPT: A Must-See Before the Semester Begins.” Faculty Focus, 9 Jan. 2023.

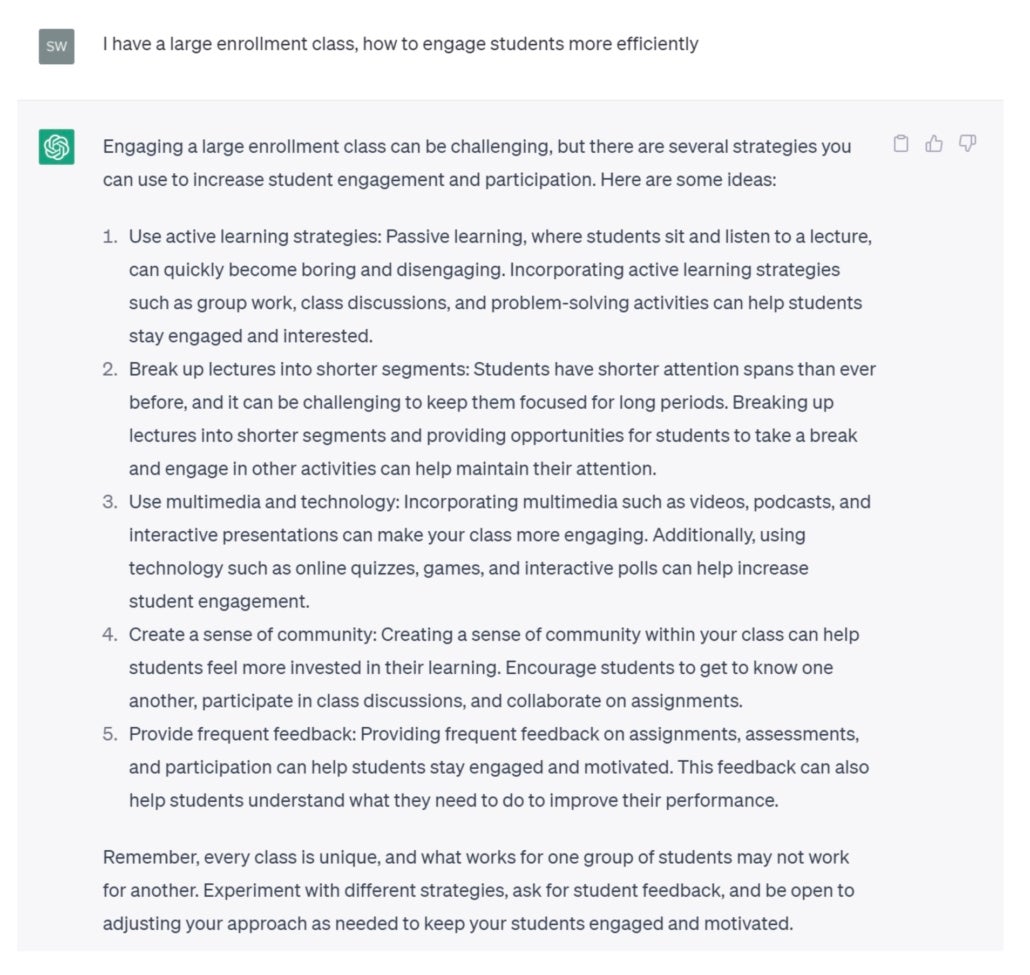

“While foundational knowledge is required for higher-order thinking, we often focus primarily or almost exclusively on the foundational. In this new paradigm, we would point students toward the appropriate modules to develop that foundational knowledge, and we’d move students as soon as possible into problem/project/case-based learning, much of it personalized and experiential or field-based. We would be mostly working with, working alongside, facilitating and supporting, and letting AI do some of the heavy lifting.”

“In this special Future Trends Forum session we’ll collectively explore this new technology. How does the chatbot work? How might it reshape academic writing? Does it herald an age of AI transforming society, or is it really BS? Experts who joined us on stage include Brent A. Anders, Rob Fentress, Philip Lingard, John Warner, Jess Stahl, and Anne Fensie.”

“Last week we hosted a session on this topic. Demand was so great for it, and so many questions remained, that we followed up right away with a sequel. What do we know about how the chatbot works? Does ChatGPT pose an existential threat to higher education, or instead offer new ways of teaching, learning, and researching?”

Barre, Betsy. “Will ChatGPT Make Us Better, Happier Teachers?” Center for the Advancement of Teaching, 20 Jan. 2023.

“For all of these reasons, we should proceed with caution. But used wisely, ChatGPT may actually make our teaching more rather than less humane. By using AI to streamline our analytic tasks, we can devote more time to fostering deeper connections with our students – connections that not only benefit them, but also serve as a much-needed source of rejuvenation for educators who have been stretched thin by years of teaching during a pandemic. In this sense, ChatGPT can be seen as a gift – a tool that can help us reconnect with our students and reignite our passion for teaching.”

Basbøll, Thomas. “Examining the Moment.” Inframethodology, 14 Dec. 2022.

“In this post, I will suggest a form of examination that I consider essentially ideal, even if we had no worries about plagiarism or artificial intelligence, but one that the increasingly sophisticated technologies in this area now make virtually necessary. That is, I’m hopeful that the fact that the take-home assignment no longer constitutes a serious test of the student’s knowledge of a subject or ability to write about it will force us to adopt a form of testing that was always much more serious.”

Bowers-Abbott, Miriam. “What Are We Doing About AI Essays?” Faculty Focus, 4 Jan. 2023.

“A few experiments with online AI software services suggest some ways to address AI essay cheating, and interventions will require refining and revisiting course prompts.”

“Edward Tian, a 22-year-old senior at Princeton University, has built an app to detect whether text is written by ChatGPT”

I hope these three observations are useful as you make sense of this new technology landscape. Here they are again for easy reference:

- We are going to have to start teaching our students how AI generation tools work.

- When used intentionally, AI tools can augment and enhance student learning, even towards traditional learning goals.

- We will need to update our learning goals for students in light of new AI tools, and that can be a good thing.

Caines, Autumm. “ChatGPT and Good Intentions in Higher Ed.” Is a Liminal Space, 29 Dec. 2022.

“I am skeptical of the tech inevitability standpoint that ChatGPT is here and we just have to live with it. The all out rejection of this tech is appealing to me as it seems tied to dark ideologies and does seem different, perhaps more dangerous, than stuff that has come before. I’m just not sure how to go about that all out rejection. I don’t think trying to hide ChatGPT from students is going to get us very far and I’ve already expressed my distaste for cop shit. In terms of practice, the rocks and the hard places are piling up on me.”

“Australia’s leading universities say redesign of how students are assessed is ‘critical’ in the face of a revolution in computer-generated text”

“Before alarm spreads about the impact on student learning, let us consider the historical value of technological advances in education. The calculator, once banned in classrooms, is now a common sight on school supply lists and in the college classroom. Instructors use calculators to explore deeper connections with mathematical concepts and instead of limiting their use, can be more intentional about how they are used to encourage critical thinking among students. Similarly, ChatGPT and other AI technologies are here to stay, and we hope that academics will actively participate in decisions around their use and integration in higher education. We can influence how ChatGPT and other AI tools might be brought into higher education to assist students in developing things like critical thinking and executive function skills.”

Cummings, Robert. “AI Writing Technologies Will Force Instructors to Adapt.” Chronicle of Higher Education, 19 Sept. 2022.

“These new AI-powered writing generation technologies are going to change college writing substantially. But they won’t end college writing. Instead, we’re going to need to create some new guard rails for the assumptions we make about writing assignments in higher education. What will that future look like?”

D’Agostino, Susan. “ChatGPT Advice Academics Can Use Now.” Inside Higher Ed, 12 Jan. 2023.

“To harness the potential and avert the risks of OpenAI’s new chat bot, academics should think a few years out, invite students into the conversation and—most of all—experiment, not panic.”

D’Agostino, Susan. “AI Writing Detection: A Losing Battle Worth Fighting.” Inside Higher Ed, 20 Jan. 2023.

“Human- and machine-generated prose may one day be indistinguishable. But that does not quell academics’ search for an answer to the question ‘What makes prose human?’”

“Educators can dig in their heels, attempting to lock down assignments and assessments, or use this new technology to imagine what comes next.”

“This resource is created by Lance Eaton for the purposes of sharing and helping other instructors see the range of policies available by other educators to help in the development of their own for navigating AI-Generative Tools (such as ChatGPT, MidJourney, Dall-E, etc).”

“if you are afraid of an explosion of cheating in your classes because of ChatGPT or any other new technological advance, you are not alone, but honestly, technology isn’t the problem. Stay tuned for more . . .”

eBildungslabor-Blog. “Einordnung und Nutzung von KI in der Bildung.” eBildungslabor, 11 Dec. 2022.

“My suggestion: In education, tools of so-called artificial intelligence can best be classified as a further development of search engines.” [from original using Google Translate]

Feldstein, Michael. “I Would Have Cheated in College Using ChatGPT.” E-Literate, 16 Dec. 2022.

“I can see the shape of a pedagogical process—and preferably a supporting end-to-end tool—that teaches many of the skills involved with good writing, including some hard ones like checking sources and editing—while including some elements of creativity. If it is scaffolded properly—again, with the right tool and process but also with a good, solid rubric—it could enable educators to spend more of their time honing in on specific aspects of the writing process with less drudgery.”

“This paper shares results from a pedagogical experiment that assigns undergraduates to “cheat” on a final class essay by requiring their use of text-generating AI software.”

“We need to embrace these tools and integrate them into pedagogies and policies. Lockdown browsers, strict dismissal policies and forbidding the use of these platforms is not a sustainable way forward.”

Groves, Mike. “If You Can’t Beat GPT3, Join It.” Times Higher Education, 16 Dec. 2022.

“Assessment is also affected. To me, it seems anachronistic to prepare students for an academic world where online translation does not exist. If we are preparing them to write essays and reports that can be supported by online translation, we should allow them to develop these competencies as part of the assessment process.”

“there’s another fix—one that might have been worth implementing even before the arrival of ChatGPT: Make students write out essays by hand. Apart from outflanking the latest AI, a return to handwritten essays could benefit students in meaningful ways.”

Heikkilä, Melissa. “How AI-Generated Text Is Poisoning the Internet.” MIT Technology Review, 20 Dec. 2022.

“The proliferation of these easily accessible large language models raises an important question: How will we know whether what we read online is written by a human or a machine? I’ve just published a story looking into the tools we currently have to spot AI-generated text. Spoiler alert: Today’s detection tool kit is woefully inadequate against ChatGPT.”

Herman, Daniel. “The End of High-School English.” The Atlantic, 9 Dec. 2022.

“If you’re looking for historical analogues, this would be like the printing press, the steam drill, and the light bulb having a baby, and that baby having access to the entire corpus of human knowledge and understanding. My life—and the lives of thousands of other teachers and professors, tutors and administrators—is about to drastically change.”

“Mr. Aumann decided to transform essay writing for his courses this semester. He plans to require students to write first drafts in the classroom, using browsers that monitor and restrict computer activity. In later drafts, students have to explain each revision. Mr. Aumann, who may forgo essays in subsequent semesters, also plans to weave ChatGPT into lessons by asking students to evaluate the chatbot’s responses.”

Jarry, Jonathan. “I Chatted with an Artificial Intelligence about Quackery.” McGill University Office for Science and Society, 16 Dec. 2022.

“I have done research on microRNAs in the past, but it can be challenging to come up with an easy-to-understand elevator pitch of what a microRNA is and what it does in the body. So I typed into ChatGPT the following request: explain what microRNAs are and what they do at the reading level of a high school freshman. The answer started to appear a few seconds later, one word at a time, as if the complex computer program was typing it out. And it was a good answer!”

Kelley, Kevin Jacob. “Teaching Actual Student Writing in an AI World.” Inside Higher Ed, 19 Jan. 2023.

“I have tested my assignments against multiple AI programs as a faculty member and Writing Across the Curriculum director. I may incorporate this technology in future courses, but for now, here are my 10 strategies that prevent the use of AI by students.”

“Moving forward, we’ll need to think of ways AI can be used to support teaching and learning, rather than disrupt it. Here are three ways to do this.”

Krause, Steve. “AI Can Save Writing by Killing ‘The College Essay.’” Steven D. Krause, 10 Dec. 2022.

“Anyway, the point I’m trying to make here (and this is something that I think most people who teach writing regularly take as a given) is that there is a big difference between assigning students to write a “college essay” and teaching students how to write essays or any other genre.”

Lametti, Daniel. “A.I. Could Be Great for College Essays.” Slate, 7 Dec. 2022.

“In 2014, a department of the U.K. government published a study of history and English papers produced by online-essay writing services for senior high school students. Most of the papers received a grade of C or lower. Much like the work of ChatGPT, the papers were vague and error-filled. It’s hard to write a good essay when you lack detailed, course-specific knowledge of the content that led to the essay question.”

“The willingness to learn is related to the growth mind-set—the belief that your abilities are not fixed but can improve. But there is a key difference: This willingness is a belief not primarily about the self but about the world. It’s a belief that every class offers something worthwhile, even if you don’t know in advance what that something is. Unfortunately, big economic and cultural obstacles stand in opposition to that belief.”

Marche, Stephen. “The College Essay Is Dead.” The Atlantic, 6 Dec. 2022.

“The essay, in particular the undergraduate essay, has been the center of humanistic pedagogy for generations. It is the way we teach children how to research, think, and write. That entire tradition is about to be disrupted from the ground up. Kevin Bryan, an associate professor at the University of Toronto, tweeted in astonishment about OpenAI’s new chatbot last week: ‘You can no longer give take-home exams/homework … Even on specific questions that involve combining knowledge across domains, the OpenAI chat is frankly better than the average MBA at this point. It is frankly amazing.’ Neither the engineers building the linguistic tech nor the educators who will encounter the resulting language are prepared for the fallout.”

McMurtrie, Beth. “AI and the Future of Undergraduate Writing.” The Chronicle of Higher Education, 13 Dec. 2022.

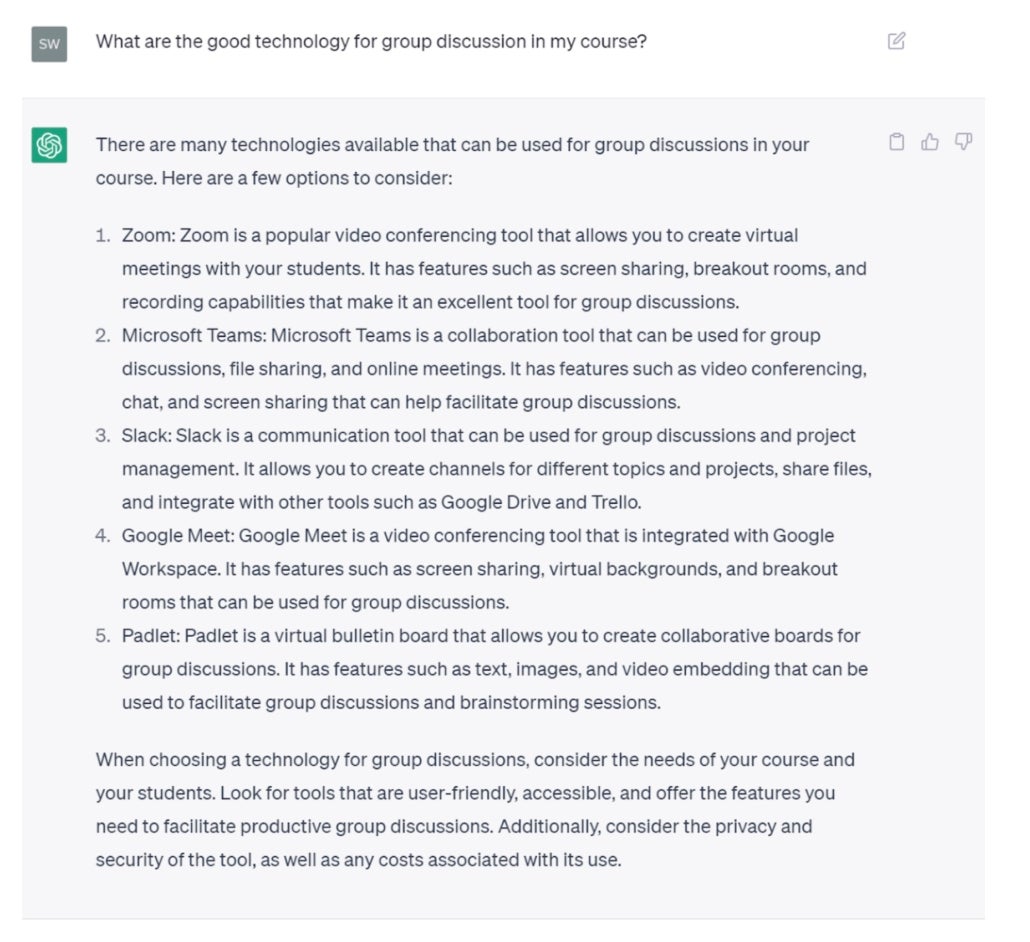

“Scholars of teaching, writing, and digital literacy say there’s no doubt that tools like ChatGPT will, in some shape or form, become part of everyday writing, the way calculators and computers have become integral to math and science. It is critical, they say, to begin conversations with students and colleagues about how to shape and harness these AI tools as an aid, rather than a substitute, for learning.”

“If we want to dissuade students from using artificial intelligence to help produce their writing, we need to treat writing differently. If we want to teach writing in our classes, if we want students to use writing as a deliberative, reflective space to facilitate critical thinking, innovation, and self-awareness, we need to move away from framing writing assignments as primarily product-based endeavors.”

“The stakes are high. Many teachers agree that learning to write can take place only as students grapple with ideas and put them into sentences. Students start out not knowing what they want to say, and as they write, they figure it out. ‘The process of writing transforms our knowledge,’ said Joshua Wilson, an associate professor in the School of Education at the University of Delaware. ‘That will completely get lost if all you’re doing is jumping to the end product.’”

“AI just stormed into the classroom with the emergence of ChatGPT. How do we teach now that it exists? How can we use it? Here are some ideas.”

Compiled for the Writing Across the Curriculum Clearinghouse as part of a larger resource collection: “AI and Teaching Writing: Starting Points for Inquiry.” This is an open and evolving list put together by a writing teacher who is not an expert in the field, with suggestions from a few other more knowledgeable folks.

Mintz, Steven. “AI Unleashed.” Inside Higher Ed, 12 Dec. 2022.

“As a historian I should be cautious and should beware of frenetic enthusiasm. We know all too well that highly touted technologies, like the blockchain, frequently fail to live up to the hype. So let me echo the Lincoln Steffens’s words after visiting the Soviet Union in 1919, fully aware that the phrase is fraught with irony: ‘I have seen the Future and it works.’”

Mintz, Steven. “Breaking Free From Higher Ed’s Iron Triangle.” Inside Higher Ed, 18 Jan. 2023.

“Yes, we can control costs, reduce performance gaps and improve learning outcomes without sacrificing quality or rigor.”

Mintz, Steven. “ChatGPT: Threat or Menace?” Inside Higher Ed, 16 Jan. 2023.

“The threat now is to the very knowledge workers who many assumed were invulnerable to technological change. If we fail to instill within our students the advanced skills and expertise that they need in today’s rapidly shifting competitive landscape, they too will be losers in the unending contest between technological innovation and education.”

Mitrano, Tracy. “Coping With ChatGPT.” Inside Higher Ed, 17 Jan. 2023.

“Mis- and disinformation is newly comprising about a third of the material of this new course. For the last couple of semesters, I have been inching my way toward including that topic. Given the political landscape globally as well as in the United States, that topic could, and should, be its own course. Artificial intelligence will certainly play a role in that sphere as it emerges in all significant walks of life; take a look, for example, at the essay Bruce Schneier published just yesterday in The New York Times on the subject of lobbying and political influence. We must deal with it. Panic will not help.”

“Chatbots are able to produce high-quality, sophisticated text in natural language. The authors of this paper believe that AI can be used to overcome three barriers to learning in the classroom: improving transfer, breaking the illusion of explanatory depth, and training students to critically evaluate explanations. The paper provides background information and techniques on how AI can be used to overcome these barriers and includes prompts and assignments that teachers can incorporate into their teaching. The goal is to help teachers use the capabilities and drawbacks of AI to improve learning.”

Mollick, Ethan. “All My Classes Suddenly Became AI Classes.” One Useful Thing (And Also Some Other Things), 17 Jan. 2023.

“All of my classes have become AI classes. And I wanted to share with you the experiments I am running to integrate AI into class (I will update you later in the semester about how they are going).”

“A look at OpenAI’s ChatGPT and how teachers in medieval studies can prevent their students from using it.”

Montclair State. “Practical Responses to ChatGPT.” Montclair State University Office for Faculty Excellence.

“ChatGPT is not without precedent or competitors (such as Jasper, Sudowrite, QuillBot, Katteb, etc). Souped-up spell-checkers such as Grammarly, Hemingway, and Word and Google-doc word-processing tools precede ChatGPT and are often used by students to review and correct their writing. Like spellcheck, these tools are useful, addressing spelling, usage, and grammar problems, and some compositional stylistic issues (like overreliance on passive voice). However, they can also be misused when writers accept suggestions quickly and thus run the danger of accepting a poor suggestion. Automation bias is in effect—we often trust an automated suggestion more than we trust ourselves. Further, over-reliance can mean students simply miss opportunities to grow and develop as writers.”

Nerantzi, Chrissi, Sandra Abegglen, Marianna Karatsiori, and Antonio Martínez-Arboleda, editors. “101 Creative Ideas to Use AI in Education, A Crowdsourced Collection.” Zenodo, 23 June 2023.

“This open crowdsourced collection by #creativeHE presents a rich tapestry of our collective thinking in the first months of 2023 stitching together potential alternative uses and applications of Artificial Intelligence (AI) that could make a difference and create new learning, development, teaching and assessment opportunities.”

Roose, Kevin, et al. “Don’t Ban ChatGPT in Schools. Teach with It.” The New York Times, 12 Jan. 2023.

“Even ChatGPT’s flaws—such as the fact that its answers to factual questions are often wrong—can become fodder for a critical thinking exercise. Several teachers told me that they had instructed students to try to trip up ChatGPT, or evaluate its responses the way a teacher would evaluate a student’s.”

Roose, Kevin, et al. “ChatGPT Transforms a Classroom and Is ‘M3GAN’ Real?” The New York Times, 13 Jan. 2023.

“A high school teacher on how the new chatbot from OpenAI is transforming her classroom—for the better.”

Schatten, Jeff. “Will Artificial Intelligence Kill College Writing?” The Chronicle of Higher Education, 14 Sept. 2022.

“The current iteration of GPT-3 has its quirks and limitations, to be sure. Most notably, it will write absolutely anything. It will generate a full essay on “how George Washington invented the internet” or an eerily informed response to “10 steps a serial killer can take to get away with murder.” In addition, it stumbles over complex writing tasks. It cannot craft a novel or even a decent short story. Its attempts at scholarly writing—I asked it to generate an article on social-role theory and negotiation outcomes—are laughable. But how long before the capability is there? Six months ago, GPT-3 struggled with rudimentary queries, and today it can write a reasonable blog post discussing ‘ways an employee can get a promotion from a reluctant boss.’”

Schiappa, Edward, and Nick Montfort. “Advice Concerning the Increase in AI-Assisted Writing.” Post Position, 10 Jan. 2023.

From two MIT professors: “The use of AI/LLM text generation is here to stay. Those of us involved in writing instruction will need to be thoughtful about how it impacts our pedagogy. … We also believe students are here at MIT to learn, and will be willing to follow thoughtfully advanced policies so they can learn to become better communicators. To that end, we hope that what we have offered here will help to open an ongoing conversation.”

“AI tools are available today that can write compelling university level essays. Taking an example of sample essay produced by the GPT-3 transformer, Mike Sharples discusses the implications of this technology for higher education and argues that they should be used to enhance pedagogy, rather than accelerating an ongoing arms race between increasingly sophisticated fraudsters and fraud detectors.”

“As a lawyer who represents students accused of cheating, ChatGPT worries me. If we want to maintain the credibility of our universities and the weight of a degree, we must get back to in-person assessments.”

“In the upcoming years, we’ll need to think about how we can help students develop these critical human skills. It might be inquiry-based learning or project-based learning. But it might also be game-based learning. It might be a lo-fi makerspace. It might be an epic, face-to-face science lab or a sketchnote video, or an interview with community members. Notice that none of these ideas are new. These are the things teachers are already doing when they empower their students with voice and choice. So am I nervous about AI? Absolutely. But am I hopeful? Most definitely. Because I know that teachers will always be at the heart of innovation.”

“Cynthia Alby discusses how artificial intelligence (like ChatGPT) is impacting higher education on episode 448 of the Teaching in Higher Ed podcast.”

Strang, Brian. “My First Chat with the Bot.” Inside Higher Ed, 12 Jan. 2023.

“Uncanny, creepy and bland: Brian Strang reflects on his chat with the artificial intelligence language model ChatGPT and the threat it does (or doesn’t) pose to writing instruction.”

Thompson, Ben. “AI Homework.” Stratechery by Ben Thompson, 5 Dec. 2022.

“It is an open question as to what jobs will be the first to be disrupted by AI; what became obvious to a bunch of folks this weekend, though, is that there is one universal activity that is under serious threat: homework.”

“USC President Carol Folt is announcing a new Center for Generative AI and Society to explore the transformative impact of artificial intelligence on culture, education, media and society.”